The AI landscape has never moved faster or felt more overwhelming. Every week brings new models, new frameworks, and new papers that rewrite what we thought we knew. So where do you actually start?

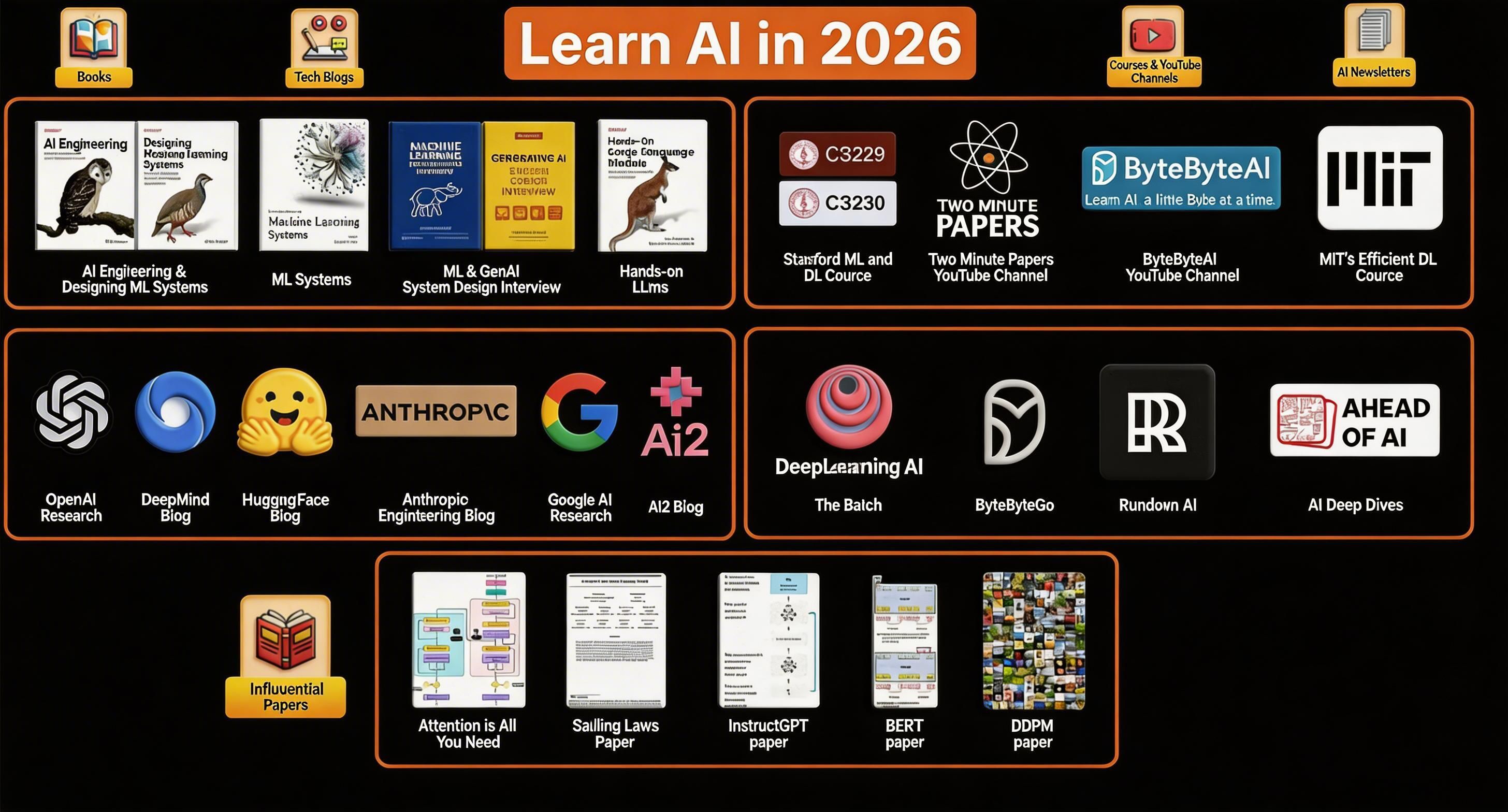

We curated 26 of the best resources across five categories: foundational books, research blogs, courses, newsletters, and landmark papers. Whether you're just starting out or deepening your expertise, this list is your roadmap.

Bookmark this. Share it. Come back to it. Let's get into it. ↓

📚 01 : Foundational & Modern Books

These books cover both timeless ML principles and the latest patterns in building production AI systems. Read them in order or pick what matches where you are.

🦉 AI Engineering 👉 Get the book →

Chip Huyen's guide to building applications with foundation models. Essential reading for any engineer working with LLMs in production.

🏗️ Designing Machine Learning Systems 👉 Get the book →

The definitive guide to building ML systems that actually work in the real world, from data pipelines to deployment and monitoring.

⚙️ Introduction to Machine Learning Systems 👉 Get the book →

A practical primer on how ML systems are designed, deployed, and scaled. Great for bridging the gap between research and engineering.

🎯 ML & GenAI System Design Interview 👉 Get the book →

Two books in one, covering classical ML system design and GenAI-specific patterns. Invaluable if you're preparing for senior ML/AI roles at top companies.

🦘 Hands-on Large Language Models 👉 Get the book →

From tokenization to fine-tuning — the most practical, hands-on LLM book available. If you read one LLM book, make it this one.

📡 02 : Research & Engineering Blogs

Stay ahead of the curve. These are the primary sources — where breakthroughs are announced, architectures are explained, and engineering decisions are unpacked. Follow all six.

⚪ OpenAI Research 👉 openai.com/research

GPT, DALL·E, Whisper, Sora — the source of some of the most influential AI research of the last decade. Follow for model releases and technical deep-dives.

🌀 DeepMind Blog 👉 deepmind.google/research

AlphaFold, Gemini, AlphaCode — DeepMind is leading some of the most ambitious AI research on Earth. Their blog explains the ideas behind the breakthroughs.

🤗 HuggingFace Blog 👉 huggingface.co/blog

Open-source AI at its best. Covers model releases, fine-tuning guides, dataset work, and community research — practical and genuinely accessible.

🟣 Anthropic Engineering Blog 👉 anthropic.com/research

The team behind Claude publishes deep research on AI safety, Constitutional AI, interpretability, and scaling. Thoughtful, technical, and worth every minute.

🔵 Google AI Research 👉 ai.google/research

Transformers, BERT, T5, PaLM — Google AI's fingerprints are on nearly every modern AI system. Follow for foundational research and product-driven breakthroughs.

🔬 AI2 Blog — Allen Institute 👉 allenai.org/blog

Independent, academic, and open-source focused. AI2 publishes on reasoning, NLP, vision, and responsible AI with a refreshingly honest voice.

"The best AI engineers aren't the ones who know the most — they're the ones who know how to learn the fastest. Build habits around these resources. 20 minutes a day compounds faster than you think."

🎓 03 : Courses & YouTube Channels

Structured learning for when you need to build real foundations — and free channels for staying sharp on the latest research without reading 50-page papers.

🏛️ Stanford CS229: Machine Learning 👉 cs229.stanford.edu — Free

Andrew Ng's legendary ML course. The math, the intuition, the algorithms — all of it. Free lecture notes and materials available. The gold standard for ML foundations.

🧠 Stanford CS230: Deep Learning 👉 cs230.stanford.edu — Free

The deep learning companion to CS229. CNNs, RNNs, transformers — taught with rigor and real-world applicability. Take CS229 first.

☢️ Two Minute Papers (YouTube) 👉 youtube.com/@TwoMinutePapers

"What a time to be alive!" — Károly Zsolnai-Fehér breaks down the latest AI papers into 2–5 minute videos. Addictive, accessible, and genuinely exciting.

📦 ByteByteAI (YouTube) 👉 youtube.com/@ByteByteAI

Visual explanations of complex AI and system design concepts — "Learn AI, a little Byte at a time." Perfect for engineers who think in diagrams.

⚡ MIT's Efficient Deep Learning Course 👉 MIT HAN Lab — Free Materials

How do you make AI models faster, smaller, and cheaper to run? Covers pruning, quantization, distillation, and hardware-aware training. Highly practical.

📬 04 : Newsletters

The fastest way to stay informed without doomscrolling. These newsletters filter the noise and deliver what actually matters — straight to your inbox.

🔴 The Batch — DeepLearning.AI 👉 deeplearning.ai/the-batch

Andrew Ng's weekly newsletter. Curated AI news, editorial perspective on trends, and research highlights. The most trusted voice in AI education — free, every week.

🗺️ ByteByteGo Newsletter 👉 blog.bytebytego.com

System design + AI, explained visually. One of the best newsletters for engineers who want to understand how modern AI systems are actually built and scaled.

⚫ Rundown AI 👉 therundown.ai — Free, Daily

5-minute daily digest of the biggest AI news. Clean, fast, and no fluff. Over 500K subscribers read this every morning to stay on top of the field.

📖 Ahead of AI — Sebastian Raschka 👉 magazine.sebastianraschka.com

Deeply technical, long-form breakdowns of AI papers and trends. Subscribe if you want to go beyond the headlines and actually understand what's changing and why.

📄 05 : The 5 Papers That Changed AI Forever

You don't need to read every paper. But these five changed the course of AI history. Read them once and you'll understand why everything in modern AI looks the way it does.

Paper 01: "Attention Is All You Need" 👉 Read on arXiv →

Vaswani et al., Google 2017. Introduced the Transformer — the architecture behind GPT, BERT, and virtually every modern LLM. One of the most cited AI papers of all time.
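
Want a feel for the core idea before opening the PDF? The paper's central operation is scaled dot-product attention, softmax(QKᵀ / √d_k)V. Below is a minimal pure-Python sketch of that one formula. The function names and the toy matrices are our own illustration, not code from the paper, and a real Transformer adds multiple heads, learned projections, and masking on top.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V on small list-of-lists matrices."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy inputs (hypothetical): 2 queries attending over 3 key/value pairs.
queries = [[1.0, 0.0], [0.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

outputs, weights = scaled_dot_product_attention(queries, keys, values)
for q, w in zip(queries, weights):
    print(q, "attends with weights", [round(x, 3) for x in w])
```

Each row of weights sums to 1, so every output is simply a blend of the value vectors, weighted by how well the query matches each key.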

Paper 02: Scaling Laws for Neural Language Models 👉 Read on arXiv →

Kaplan et al., OpenAI 2020. Revealed how model performance scales predictably with compute, data, and parameters. The paper that launched the scaling era.
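
To make "scales predictably" concrete: the paper fits test loss to power laws, for example L(N) = (N_c / N)^α in the number of parameters N. Here is a rough sketch of that shape. The constants below (N_c ≈ 8.8e13, α ≈ 0.076) are approximate values as we recall them from the paper, so treat them as illustrative assumptions and check the original before relying on them.

```python
def power_law_loss(n_params, n_c=8.8e13, alpha=0.076):
    """Predicted loss under a parameter-count power law, L(N) = (N_c / N) ** alpha.

    n_c and alpha are illustrative assumptions, not authoritative fits.
    """
    return (n_c / n_params) ** alpha


# Predicted loss for three hypothetical model sizes.
for n in (1e8, 1e9, 1e10):
    print(f"{n:.0e} parameters -> predicted loss {power_law_loss(n):.3f}")

# Under a pure power law, every 10x increase in parameters multiplies
# the predicted loss by the same factor, 10 ** -alpha.
shrink_per_10x = power_law_loss(1e10) / power_law_loss(1e9)
print(f"loss multiplier per 10x parameters: {shrink_per_10x:.3f}")
```

That constant multiplier is the whole point: on a log-log plot the curve is a straight line, which is what lets labs estimate the payoff of a bigger model before training it.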

Paper 03: InstructGPT 👉 Read on arXiv →

Ouyang et al., OpenAI 2022. Explained how RLHF aligns language models to follow instructions. The method behind ChatGPT and most modern AI assistants.

Paper 04: BERT 👉 Read on arXiv →

Devlin et al., Google 2018. Bidirectional pre-training for language understanding. Revolutionized NLP benchmarks and showed the power of pre-train + fine-tune.

Paper 05: DDPM (Denoising Diffusion Probabilistic Models) 👉 Read on arXiv →

Ho et al., 2020. A foundational paper for the diffusion models behind tools like Stable Diffusion, DALL·E 2, and Midjourney. Popularized the diffusion framework that powers much of today's generative image AI.
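
If "diffusion" still feels abstract, the forward (noising) half is easy to sketch. DDPM gradually mixes data with Gaussian noise, and the paper gives a closed form for jumping straight to step t: x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, where ᾱ_t is the running product of (1 − β). The sketch below uses the linear β schedule from the paper (1e-4 to 0.02 over 1000 steps). The function names and the toy "image" are our own assumptions, and the learned reverse (denoising) network is not shown.

```python
import math
import random


def linear_betas(steps=1000, start=1e-4, end=0.02):
    """Linear noise schedule: beta rises evenly from start to end."""
    return [start + (end - start) * t / (steps - 1) for t in range(steps)]


def alpha_bars(betas):
    """Cumulative product of (1 - beta): how much signal survives by step t."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def noise_sample(x0, t, a_bars, rng):
    """Jump straight to step t: sqrt(a_bar) * x0 + sqrt(1 - a_bar) * noise."""
    a_bar = a_bars[t]
    return [
        math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * rng.gauss(0.0, 1.0)
        for x in x0
    ]


a_bars = alpha_bars(linear_betas())
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5, 0.0]  # hypothetical stand-in for a tiny image

for t in (0, 250, 999):
    noisy = noise_sample(x0, t, a_bars, rng)
    print(f"t={t:4d}  signal kept={a_bars[t]:.5f}  sample={[round(v, 2) for v in noisy]}")
```

By the last step almost no signal is left, so the sample is essentially pure noise. Training teaches a network to undo that process one step at a time.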

Enjoying this newsletter?

Follow me across all platforms for more tools, AI experiments, and hidden corners of the internet:

📱 All Socials: MrWebsiter/ @mrwebsiter
